Computer science

Computer science is the study of manipulating, managing, transforming and encoding information.

There are many different areas in computer science. Some areas consider problems in an abstract manner, while others need special machines called computers.

A person who works with computers will often need mathematics,[1] science, and logic in order to design and work with computers.

History


The earliest foundations of what would become computer science predate the invention of the modern digital computer. Machines for calculating fixed numerical tasks such as the abacus have existed since antiquity, aiding in computations such as multiplication and division. Algorithms for performing computations have existed since antiquity, even before the development of sophisticated computing equipment.

Wilhelm Schickard designed and constructed the first working mechanical calculator in 1623.[4] In 1673, Gottfried Leibniz demonstrated a digital mechanical calculator, called the Stepped Reckoner.[5] Leibniz may be considered the first computer scientist and information theorist, for, among other reasons, documenting the binary number system. In 1820, Thomas de Colmar launched the mechanical calculator industry[note 1] when he invented his simplified arithmometer, the first calculating machine strong enough and reliable enough to be used daily in an office environment. Charles Babbage started the design of the first automatic mechanical calculator, his Difference Engine, in 1822, which eventually gave him the idea of the first programmable mechanical calculator, his Analytical Engine.[6] He started developing this machine in 1834, and "in less than two years, he had sketched out many of the salient features of the modern computer".[7] "A crucial step was the adoption of a punched card system derived from the Jacquard loom",[7] making it infinitely programmable.[note 2] In 1843, during the translation of a French article on the Analytical Engine, Ada Lovelace wrote, in one of the many notes she included, an algorithm to compute the Bernoulli numbers, which is considered to be the first published algorithm ever specifically tailored for implementation on a computer.[8] Around 1885, Herman Hollerith invented the tabulator, which used punched cards to process statistical information; eventually his company became part of IBM. Following Babbage, although unaware of his earlier work, Percy Ludgate in 1909 published the second of only two designs for mechanical analytical engines in history.[9]

In 1937, one hundred years after Babbage's impossible dream, Howard Aiken convinced IBM, which was making all kinds of punched card equipment and was also in the calculator business,[10] to develop his giant programmable calculator, the ASCC/Harvard Mark I, based on Babbage's Analytical Engine, which itself used cards and a central computing unit. When the machine was finished, some hailed it as "Babbage's dream come true".[11]

During the 1940s, with the development of new and more powerful computing machines such as the Atanasoff–Berry computer and ENIAC, the term computer came to refer to the machines rather than their human predecessors.[12] As it became clear that computers could be used for more than just mathematical calculations, the field of computer science broadened to study computation in general. In 1945, IBM founded the Watson Scientific Computing Laboratory at Columbia University in New York City. The renovated fraternity house on Manhattan's West Side was IBM's first laboratory devoted to pure science. The lab is the forerunner of IBM's Research Division, which today operates research facilities around the world.[13] Ultimately, the close relationship between IBM and the university was instrumental in the emergence of a new scientific discipline, with Columbia offering one of the first academic-credit courses in computer science in 1946.[14] Computer science began to be established as a distinct academic discipline in the 1950s and early 1960s.[15][16] The world's first computer science degree program, the Cambridge Diploma in Computer Science, began at the University of Cambridge Computer Laboratory in 1953. The first computer science department in the United States was formed at Purdue University in 1962.[17] Since practical computers became available, many applications of computing have become distinct areas of study in their own right.

Etymology

Although first proposed in 1956,[18] the term "computer science" appears in a 1959 article in Communications of the ACM,[19] in which Louis Fein argues for the creation of a Graduate School in Computer Sciences analogous to the creation of Harvard Business School in 1921,[20] justifying the name by arguing that, like management science, the subject is applied and interdisciplinary in nature, while having the characteristics typical of an academic discipline.[19] His efforts, and those of others such as numerical analyst George Forsythe, were rewarded: universities went on to create such departments, starting with Purdue in 1962.[21] Despite its name, a significant amount of computer science does not involve the study of computers themselves. Because of this, several alternative names have been proposed.[22] Certain departments of major universities prefer the term computing science, to emphasize precisely that difference. Danish scientist Peter Naur suggested the term datalogy,[23] to reflect the fact that the scientific discipline revolves around data and data treatment, while not necessarily involving computers. The first scientific institution to use the term was the Department of Datalogy at the University of Copenhagen, founded in 1969, with Peter Naur being the first professor in datalogy. The term is used mainly in the Scandinavian countries. An alternative term, also proposed by Naur, is data science; this is now used for a multi-disciplinary field of data analysis, including statistics and databases.

In the early days of computing, a number of terms for the practitioners of the field of computing were suggested in the Communications of the ACM—turingineer, turologist, flow-charts-man, applied meta-mathematician, and applied epistemologist.[24] Three months later in the same journal, comptologist was suggested, followed next year by hypologist.[25] The term computics has also been suggested.[26] In Europe, terms derived from contracted translations of the expression "automatic information" (e.g. "informazione automatica" in Italian) or "information and mathematics" are often used, e.g. informatique (French), Informatik (German), informatica (Italian, Dutch), informática (Spanish, Portuguese), informatika (Slavic languages and Hungarian) or pliroforiki (πληροφορική, which means informatics) in Greek. Similar words have also been adopted in the UK (as in the School of Informatics of the University of Edinburgh).[27] "In the U.S., however, informatics is linked with applied computing, or computing in the context of another domain."[28]

A folkloric quotation, often attributed to—but almost certainly not first formulated by—Edsger Dijkstra, states that "computer science is no more about computers than astronomy is about telescopes."[note 3] The design and deployment of computers and computer systems is generally considered the province of disciplines other than computer science. For example, the study of computer hardware is usually considered part of computer engineering, while the study of commercial computer systems and their deployment is often called information technology or information systems. However, there has been much cross-fertilization of ideas between the various computer-related disciplines. Computer science research also often intersects other disciplines, such as philosophy, cognitive science, linguistics, mathematics, physics, biology, Earth science, statistics, and logic.

Computer science is considered by some to have a much closer relationship with mathematics than many scientific disciplines, with some observers saying that computing is a mathematical science.[15] Early computer science was strongly influenced by the work of mathematicians such as Kurt Gödel, Alan Turing, John von Neumann, Rózsa Péter and Alonzo Church and there continues to be a useful interchange of ideas between the two fields in areas such as mathematical logic, category theory, domain theory, and algebra.[18]

The academic, political, and funding aspects of computer science tend to depend on whether a department is formed with a mathematical emphasis or with an engineering emphasis. Computer science departments with a mathematics emphasis and with a numerical orientation consider alignment with computational science. Both types of departments tend to make efforts to bridge the field educationally if not across all research.

Common tasks for a computer scientist

Asking questions

Computer scientists ask questions so that people can find new and easier ways to do things, and better ways to approach problems.

While computers can do some things easily (like simple math, or sorting a list of names from A to Z), they cannot answer questions when there is not enough information, or when there is no real answer. Computers may also take too much time to finish long tasks. For example, finding the shortest route that visits every town in the USA may take far too long, so instead a computer will try to make a close guess. A computer can answer these simpler questions much faster.
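The shortest-route example is known as the travelling salesman problem. As a minimal sketch (the five towns and their coordinates are invented for illustration), the exact method below tries every possible route, which quickly becomes impossible as towns are added. The "close guess" shown here is the nearest-neighbour heuristic, one common way of guessing that is fast but not guaranteed to find the shortest route.

```python
import itertools
import math

# Hypothetical towns and (x, y) map coordinates, invented for this example.
TOWNS = {
    "A": (0, 0),
    "B": (1, 5),
    "C": (5, 2),
    "D": (6, 6),
    "E": (8, 3),
}


def tour_length(order):
    """Length of a round trip that visits the towns in `order` and returns home."""
    total = 0.0
    for i in range(len(order)):
        here = TOWNS[order[i]]
        there = TOWNS[order[(i + 1) % len(order)]]  # last leg wraps back to start
        total += math.dist(here, there)
    return total


def exact_shortest_tour(start="A"):
    """Try every possible order: always correct, but checks (n-1)! routes."""
    others = [t for t in TOWNS if t != start]
    best = min(itertools.permutations(others),
               key=lambda rest: tour_length((start,) + rest))
    return (start,) + best


def nearest_neighbour_tour(start="A"):
    """Close guess: always travel to the nearest town not yet visited."""
    tour = [start]
    unvisited = set(TOWNS) - {start}
    while unvisited:
        here = TOWNS[tour[-1]]
        nearest = min(unvisited, key=lambda t: math.dist(here, TOWNS[t]))
        tour.append(nearest)
        unvisited.remove(nearest)
    return tuple(tour)


if __name__ == "__main__":
    for name, tour in [("exact", exact_shortest_tour()),
                       ("guess", nearest_neighbour_tour())]:
        print(name, tour, round(tour_length(tour), 2))
```

With five towns the exact search checks only 24 routes, but with 20 towns it would have to check more than 10^17, while the nearest-neighbour guess stays quick.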

The relationship between Computer Science and Software Engineering is a contentious issue, which is further muddied by disputes over what the term "Software Engineering" means, and how computer science is defined.[29] David Parnas, taking a cue from the relationship between other engineering and science disciplines, has claimed that the principal focus of computer science is studying the properties of computation in general, while the principal focus of software engineering is the design of specific computations to achieve practical goals, making the two separate but complementary disciplines.[30]

Philosophy

A number of computer scientists have argued for the distinction of three separate paradigms in computer science. Peter Wegner argued that those paradigms are science, technology, and mathematics.[31] Peter Denning's working group argued that they are theory, abstraction (modeling), and design.[32] Amnon H. Eden described them as the "rationalist paradigm" (which treats computer science as a branch of mathematics, which is prevalent in theoretical computer science, and mainly employs deductive reasoning), the "technocratic paradigm" (which might be found in engineering approaches, most prominently in software engineering), and the "scientific paradigm" (which approaches computer-related artifacts from the empirical perspective of natural sciences, identifiable in some branches of artificial intelligence).[33] Computer science focuses on methods involved in design, specification, programming, verification, implementation and testing of human-made computing systems.[34]

Fields


As a discipline, computer science spans a range of topics from theoretical studies of algorithms and the limits of computation to the practical issues of implementing computing systems in hardware and software.[35][36] CSAB, formerly called Computing Sciences Accreditation Board—which is made up of representatives of the Association for Computing Machinery (ACM), and the IEEE Computer Society (IEEE CS)[37]—identifies four areas that it considers crucial to the discipline of computer science: theory of computation, algorithms and data structures, programming methodology and languages, and computer elements and architecture. In addition to these four areas, CSAB also identifies fields such as software engineering, artificial intelligence, computer networking and communication, database systems, parallel computation, distributed computation, human–computer interaction, computer graphics, operating systems, and numerical and symbolic computation as being important areas of computer science.[35]

Theoretical computer science

Theoretical computer science is mathematical and abstract in spirit, but it derives its motivation from practical, everyday computation. Its aim is to understand the nature of computation and, as a consequence of this understanding, provide more efficient methodologies.

Theory of computation

According to Peter Denning, the fundamental question underlying computer science is, "What can be automated?"[15] Theory of computation is focused on answering fundamental questions about what can be computed and what amount of resources are required to perform those computations. In an effort to answer the first question, computability theory examines which computational problems are solvable on various theoretical models of computation. The second question is addressed by computational complexity theory, which studies the time and space costs associated with different approaches to solving a multitude of computational problems.

The famous P = NP? problem, one of the Millennium Prize Problems,[38] is an open problem in the theory of computation.
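A rough intuition for the P = NP? question: for some problems, a proposed answer is quick to check, even though no fast way to find one is known. The sketch below uses subset sum (does some group of the numbers add up exactly to a target?), which is an NP-complete problem. The certificate format (a list of indices) and the function names are assumptions made for this example. Checking takes one pass, whereas the brute-force search may have to try all 2^n subsets.

```python
from itertools import combinations


def verify(numbers, target, certificate):
    """Fast check of a proposed answer: a list of distinct indices into numbers."""
    return (len(set(certificate)) == len(certificate)
            and all(0 <= i < len(numbers) for i in certificate)
            and sum(numbers[i] for i in certificate) == target)


def search(numbers, target):
    """Brute force: may try all 2**n subsets before giving up."""
    n = len(numbers)
    for size in range(n + 1):
        for subset in combinations(range(n), size):
            if sum(numbers[i] for i in subset) == target:
                return list(subset)
    return None


if __name__ == "__main__":
    numbers = [3, 34, 4, 12, 5, 2]
    answer = search(numbers, 9)                 # indices 2 and 4, i.e. 4 + 5
    print(answer, verify(numbers, 9, answer))   # [2, 4] True
```

If P = NP, every problem whose answers can be checked this quickly could also be solved quickly; nobody has proved this either way.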

Subfields: automata theory, formal languages, computability theory, computational complexity theory, models of computation, quantum computing theory, logic circuit theory, cellular automata.

Information and coding theory

Information theory, closely related to probability and statistics, is related to the quantification of information. This was developed by Claude Shannon to find fundamental limits on signal processing operations such as compressing data and on reliably storing and communicating data.[39] Coding theory is the study of the properties of codes (systems for converting information from one form to another) and their fitness for a specific application. Codes are used for data compression, cryptography, error detection and correction, and more recently also for network coding. Codes are studied for the purpose of designing efficient and reliable data transmission methods. [40]
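A minimal sketch of both ideas: Shannon entropy measures the average information per symbol in bits, and a single even-parity bit is one of the simplest error-detecting codes. The function names are illustrative, and the entropy here is estimated from the symbol frequencies within one message.

```python
import math
from collections import Counter


def shannon_entropy(message):
    """Average information per symbol, in bits: H = sum of p * log2(1/p)."""
    counts = Counter(message)
    n = len(message)
    return sum((c / n) * math.log2(n / c) for c in counts.values())


def add_parity_bit(bits):
    """Even parity: append one bit so the total number of 1s is even."""
    return bits + [sum(bits) % 2]


def parity_ok(received):
    """True if the number of 1s is even; any single flipped bit makes it False."""
    return sum(received) % 2 == 0


if __name__ == "__main__":
    print(shannon_entropy("abab"))   # 1.0 bit per symbol
    print(shannon_entropy("abcd"))   # 2.0 bits per symbol
    sent = add_parity_bit([1, 0, 1, 1])           # [1, 0, 1, 1, 1]
    corrupted = [0] + sent[1:]                    # first bit flipped in transit
    print(parity_ok(sent), parity_ok(corrupted))  # True False
```

A parity bit can detect that one bit changed but not which one; coding theory studies stronger codes that can also correct errors.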

Subfields: coding theory, channel capacity, algorithmic information theory, signal detection theory, Kolmogorov complexity.

Data structures and algorithms

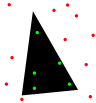

Data structures and algorithms are the studies of commonly used computational methods and their computational efficiency.
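As a small illustration of analysing an algorithm's efficiency, the sketch below counts how many items each of two classic search methods has to look at. Linear search checks items one by one, so its work grows in proportion to n. Binary search only works on a sorted list, but it halves the remaining range at each step, so it needs about log2(n) looks. The `looks` counter is added purely for illustration.

```python
def linear_search(items, target):
    """Look at items one at a time: up to n looks, O(n)."""
    looks = 0
    for index, item in enumerate(items):
        looks += 1
        if item == target:
            return index, looks
    return -1, looks


def binary_search(items, target):
    """Items must be sorted. Halve the search range each step: O(log n) looks."""
    lo, hi = 0, len(items) - 1
    looks = 0
    while lo <= hi:
        mid = (lo + hi) // 2
        looks += 1
        if items[mid] == target:
            return mid, looks
        if items[mid] < target:
            lo = mid + 1   # target can only be in the upper half
        else:
            hi = mid - 1   # target can only be in the lower half
    return -1, looks


if __name__ == "__main__":
    data = list(range(1_000_000))
    print(linear_search(data, 999_999))   # (999999, 1000000)
    print(binary_search(data, 999_999))
```

On a sorted list of one million numbers, finding the last one takes 1,000,000 looks with linear search and at most 20 with binary search.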

Subfields: analysis of algorithms, algorithm design, data structures, combinatorial optimization, computational geometry, randomized algorithms.

Programming language theory and formal methods

Programming language theory is a branch of computer science that deals with the design, implementation, analysis, characterization, and classification of programming languages and their individual features. It falls within the discipline of computer science, both depending on and affecting mathematics, software engineering, and linguistics. It is an active research area, with numerous dedicated academic journals.
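To make the "design, implementation, analysis" of a language concrete, here is a minimal sketch of an interpreter for a tiny made-up language: whole-number arithmetic with `let` bindings. The syntax-tree classes (`Num`, `Var`, `Add`, `Mul`, `Let`) and the `evaluate` function are hypothetical names for this example. Giving each kind of expression a meaning by recursing over the tree is the basic idea behind many interpreters.

```python
from dataclasses import dataclass
from typing import Union


@dataclass
class Num:
    value: int


@dataclass
class Var:
    name: str


@dataclass
class Add:
    left: "Expr"
    right: "Expr"


@dataclass
class Mul:
    left: "Expr"
    right: "Expr"


@dataclass
class Let:
    """let name = bound in body"""
    name: str
    bound: "Expr"
    body: "Expr"


Expr = Union[Num, Var, Add, Mul, Let]


def evaluate(expr, env=None):
    """Give an expression its meaning by recursing over the syntax tree."""
    env = {} if env is None else env
    if isinstance(expr, Num):
        return expr.value
    if isinstance(expr, Var):
        return env[expr.name]          # KeyError: the variable was never bound
    if isinstance(expr, Add):
        return evaluate(expr.left, env) + evaluate(expr.right, env)
    if isinstance(expr, Mul):
        return evaluate(expr.left, env) * evaluate(expr.right, env)
    if isinstance(expr, Let):
        value = evaluate(expr.bound, env)
        # The body sees the new binding; the outer environment is unchanged.
        return evaluate(expr.body, {**env, expr.name: value})
    raise TypeError(f"not an expression: {expr!r}")
```

For example, `evaluate(Let("x", Add(Num(2), Num(3)), Mul(Var("x"), Var("x"))))` computes "let x = 2 + 3 in x * x", which is 25.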

Formal methods are a particular kind of mathematically based technique for the specification, development and verification of software and hardware systems.[41] The use of formal methods for software and hardware design is motivated by the expectation that, as in other engineering disciplines, performing appropriate mathematical analysis can contribute to the reliability and robustness of a design. They form an important theoretical underpinning for software engineering, especially where safety or security is involved. Formal methods are a useful adjunct to software testing since they help avoid errors and can also give a framework for testing. For industrial use, tool support is required. However, the high cost of using formal methods means that they are usually only used in the development of high-integrity and life-critical systems, where safety or security is of utmost importance. Formal methods are best described as the application of a fairly broad variety of theoretical computer science fundamentals, in particular logic calculi, formal languages, automata theory, and program semantics, but also type systems and algebraic data types to problems in software and hardware specification and verification.
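A tiny taste of formal verification, written in Lean 4 and assuming a recent toolchain in which the built-in `omega` arithmetic tactic is available. The function `double` and both theorems are made up for illustration. Unlike a test, each theorem is checked by Lean for every natural number `n`, not just a few sample inputs.

```lean
-- Hypothetical function under study.
def double (n : Nat) : Nat := n + n

-- Specification 1: double n always equals 2 * n.
theorem double_eq_two_mul (n : Nat) : double n = 2 * n := by
  show n + n = 2 * n   -- unfold the definition of double
  omega                -- decide the linear arithmetic goal

-- Specification 2: the result is always even.
theorem double_even (n : Nat) : double n % 2 = 0 := by
  show (n + n) % 2 = 0
  omega
```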

Subfields: formal semantics, type theory, compiler design, programming languages, formal verification, automated theorem proving.

Computer systems and computational processes

Artificial intelligence

Artificial intelligence (AI) aims to synthesize goal-oriented processes such as problem-solving, decision-making, environmental adaptation, learning, and communication found in humans and animals. From its origins in cybernetics and in the Dartmouth Conference (1956), artificial intelligence research has been necessarily cross-disciplinary, drawing on areas of expertise such as applied mathematics, symbolic logic, semiotics, electrical engineering, philosophy of mind, neurophysiology, and social intelligence. AI is associated in the popular mind with robotic development, but its main field of practical application has been as an embedded component in areas of software development that require computational understanding. The starting point in the late 1940s was Alan Turing's question "Can computers think?", and the question remains effectively unanswered, although the Turing test is still used to assess computer output on the scale of human intelligence. However, the automation of evaluative and predictive tasks has been increasingly successful as a substitute for human monitoring and intervention in domains of computer application involving complex real-world data.

Computer architecture and organization

Computer architecture, or digital computer organization, is the conceptual design and fundamental operational structure of a computer system. It focuses largely on the way the central processing unit operates internally and accesses addresses in memory.[42] Computer engineers study computational logic and the design of computer hardware, from individual processor components and microcontrollers to personal computers, supercomputers and embedded systems. The term “architecture” in computer literature can be traced to the work of Lyle R. Johnson and Frederick P. Brooks, Jr., members of the Machine Organization department in IBM's main research center in 1959.
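To picture how a processor steps through instructions and memory addresses, here is a minimal fetch-decode-execute loop for a made-up machine with a single register. The instruction names (`LOAD`, `ADD`, `STORE`, `JUMP_IF_ZERO`, `HALT`) and the one-register design are assumptions for illustration; real instruction sets are far richer and are encoded as binary numbers, not strings.

```python
def run(program, memory):
    """Fetch-decode-execute loop for a made-up one-register machine.

    program: list of (opcode, address) pairs; memory: list of ints, updated in place.
    """
    acc = 0  # accumulator: the machine's single working register
    pc = 0   # program counter: index of the next instruction
    while True:
        opcode, address = program[pc]   # fetch
        pc += 1
        if opcode == "LOAD":            # decode, then execute
            acc = memory[address]
        elif opcode == "ADD":
            acc += memory[address]
        elif opcode == "STORE":
            memory[address] = acc
        elif opcode == "JUMP_IF_ZERO":
            if acc == 0:
                pc = address            # here the address names an instruction
        elif opcode == "HALT":
            return memory
        else:
            raise ValueError(f"unknown opcode: {opcode}")


if __name__ == "__main__":
    add_two_cells = [("LOAD", 0), ("ADD", 1), ("STORE", 2), ("HALT", 0)]
    print(run(add_two_cells, [2, 3, 0]))   # [2, 3, 5]
```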

Concurrent, parallel and distributed computing

Concurrency is a property of systems in which several computations are executing simultaneously, and potentially interacting with each other.[43] A number of mathematical models have been developed for general concurrent computation including Petri nets, process calculi and the Parallel Random Access Machine model.[44] When multiple computers are connected in a network while using concurrency, this is known as a distributed system. Computers within that distributed system have their own private memory, and information can be exchanged to achieve common goals.[45]
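A minimal sketch of interacting concurrent computations: several threads update one shared counter. The `Counter` class and `run_workers` function are illustrative names. The lock makes each read-add-write happen as a single step; without it, two threads could read the same old value and one update could be lost (how often that shows up depends on the Python implementation and on timing). In the standard CPython build, threads like these take turns running Python code rather than running it truly in parallel.

```python
import threading


class Counter:
    """A counter shared by several threads."""

    def __init__(self):
        self.value = 0
        self._lock = threading.Lock()

    def increment(self):
        # Reading, adding and writing back must not interleave with another
        # thread doing the same, so only one thread at a time holds the lock.
        with self._lock:
            self.value += 1


def run_workers(num_threads=4, increments_per_thread=10_000):
    counter = Counter()

    def worker():
        for _ in range(increments_per_thread):
            counter.increment()

    threads = [threading.Thread(target=worker) for _ in range(num_threads)]
    for t in threads:
        t.start()   # all workers now run concurrently
    for t in threads:
        t.join()    # wait until every worker has finished
    return counter.value


if __name__ == "__main__":
    print(run_workers())   # 40000
```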

Computer networks

This branch of computer science studies how computers are connected into networks, from small local networks to the worldwide Internet, and how they exchange data.

Computer security and cryptography

Computer security is a branch of computer technology with the objective of protecting information from unauthorized access, disruption, or modification while maintaining the accessibility and usability of the system for its intended users. Cryptography is the practice and study of hiding information (encryption) and recovering it (decryption). Modern cryptography is closely tied to computer science, because many encryption and decryption algorithms rely on problems that are computationally hard to solve without the key.
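Textbook RSA is a classic example of cryptography that rests on computational complexity: anyone can encrypt with the public key (n, e), but recovering the private exponent d is believed to require factoring n. The sketch below uses the well-known toy primes 61 and 53, so it is completely insecure; real systems use far larger primes plus padding schemes, and should rely on a vetted library rather than code like this. `pow(e, -1, phi)` (a modular inverse) needs Python 3.8 or later.

```python
def make_keys(p, q, e):
    """Textbook RSA keys from two primes p, q and public exponent e (toy, insecure)."""
    n = p * q
    phi = (p - 1) * (q - 1)
    d = pow(e, -1, phi)   # modular inverse of e; raises ValueError if none exists
    return (n, e), (n, d)


def encrypt(message, public_key):
    n, e = public_key
    return pow(message, e, n)    # message must be an integer 0 <= message < n


def decrypt(ciphertext, private_key):
    n, d = private_key
    return pow(ciphertext, d, n)


if __name__ == "__main__":
    public, private = make_keys(61, 53, 17)
    secret = encrypt(65, public)
    print(public, private, secret, decrypt(secret, private))
    # (3233, 17) (3233, 2753) 2790 65
```

Breaking this key only requires factoring 3233 = 61 × 53, which is instant; with numbers hundreds of digits long, no efficient method is publicly known.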

Databases and data mining

A database is intended to organize, store, and retrieve large amounts of data easily. Digital databases are managed using database management systems to store, create, maintain, and search data, through database models and query languages. Data mining is a process of discovering patterns in large data sets.
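A minimal example using Python's built-in `sqlite3` module; the table, column names and rows are invented for illustration. The query language (SQL) states which rows are wanted, and the database management system decides how to find them.

```python
import sqlite3


def build_db():
    """Create an in-memory database with one made-up table."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE towns (name TEXT PRIMARY KEY, population INTEGER)")
    conn.executemany(
        "INSERT INTO towns (name, population) VALUES (?, ?)",
        [("Northtown", 30_000), ("Southville", 25_000), ("Eastford", 8_000)],
    )
    conn.commit()
    return conn


def towns_over(conn, min_population):
    """Ask in SQL for the rows we want; the database decides how to find them."""
    rows = conn.execute(
        "SELECT name FROM towns WHERE population > ? ORDER BY name",
        (min_population,),  # '?' placeholder: the value is passed separately from the SQL text
    ).fetchall()
    return [name for (name,) in rows]


if __name__ == "__main__":
    conn = build_db()
    print(towns_over(conn, 10_000))   # ['Northtown', 'Southville']
```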

Computer graphics and visualization

Computer graphics is the study of digital visual content and involves the synthesis and manipulation of image data. The study is connected to many other fields in computer science, including computer vision, image processing, and computational geometry, and is heavily applied in the fields of special effects and video games.

Subfields: 2D computer graphics, computer animation, rendering, mixed reality, virtual reality, solid modeling.

Image and sound processing

Information can take the form of images, sound, video or other multimedia. Bits of information can be streamed via signals. Its processing is the central notion of informatics, the European view on computing, which studies information processing algorithms independently of the type of information carrier, whether it is electrical, mechanical or biological. This field plays an important role in information theory, telecommunications and information engineering, and has applications in medical image computing and speech synthesis, among others. The question "What is the lower bound on the complexity of fast Fourier transform algorithms?" is one of the unsolved problems in theoretical computer science.
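A sketch of why fast Fourier transform (FFT) algorithms matter: the direct discrete Fourier transform does about n × n multiplications, while the recursive radix-2 Cooley–Tukey FFT below reuses half-size results so that it needs only about n log2 n. This version assumes the input length is a power of two; production code would normally call a library routine instead.

```python
import cmath


def dft(x):
    """Direct discrete Fourier transform: about n * n multiplications."""
    n = len(x)
    return [sum(x[t] * cmath.exp(-2j * cmath.pi * k * t / n) for t in range(n))
            for k in range(n)]


def fft(x):
    """Recursive radix-2 Cooley-Tukey FFT: about n * log2(n) work.

    Assumes len(x) is a power of two.
    """
    n = len(x)
    if n == 0 or n & (n - 1):
        raise ValueError("length must be a power of two")
    if n == 1:
        return [complex(x[0])]
    even = fft(x[0::2])   # transform of the even-indexed samples
    odd = fft(x[1::2])    # transform of the odd-indexed samples
    result = [0j] * n
    for k in range(n // 2):
        twiddle = cmath.exp(-2j * cmath.pi * k / n) * odd[k]
        result[k] = even[k] + twiddle
        result[k + n // 2] = even[k] - twiddle
    return result


if __name__ == "__main__":
    signal = [1, 2, 3, 4, 0, 0, 0, 0]
    slow, fast = dft(signal), fft(signal)
    print(max(abs(a - b) for a, b in zip(slow, fast)))  # only tiny rounding differences
```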

Subfields: FFT algorithms, image processing, speech recognition, data compression, medical image computing, speech synthesis.

Applied computer science

Computational science, finance and engineering

Scientific computing (or computational science) is the field of study concerned with constructing mathematical models and quantitative analysis techniques and using computers to analyze and solve scientific problems. A major usage of scientific computing is simulation of various processes, including computational fluid dynamics, physical, electrical, and electronic systems and circuits, as well as societies and social situations (notably war games) along with their habitats, among many others. Modern computers enable optimization of such designs as complete aircraft. Notable in electrical and electronic circuit design is SPICE,[46] as well as software for physical realization of new (or modified) designs. The latter includes essential design software for integrated circuits.[citation needed]
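Simulation often means stepping a mathematical model forward in small time steps. As a minimal sketch, this code applies the forward Euler method to Newton's law of cooling (a hot drink cooling towards room temperature); the model and all numbers are illustrative choices, not taken from the text. Because this simple model also has an exact formula, the error can be seen shrinking as the time step gets smaller.

```python
import math


def simulate_cooling(temp0, room, k, dt, steps):
    """Forward Euler for Newton's law of cooling: dT/dt = -k * (T - room)."""
    temp = temp0
    for _ in range(steps):
        temp += dt * (-k * (temp - room))   # follow the current slope for dt
    return temp


def exact_cooling(temp0, room, k, t):
    """Closed-form solution of the same equation, used to measure the error."""
    return room + (temp0 - room) * math.exp(-k * t)


if __name__ == "__main__":
    exact = exact_cooling(90, 20, 0.1, 10)
    for dt in (1.0, 0.1, 0.01):
        approx = simulate_cooling(90, 20, 0.1, dt, round(10 / dt))
        print(dt, round(approx, 2), "error", abs(approx - exact))
```

For a 90° drink in a 20° room with k = 0.1 per minute, the exact temperature after 10 minutes is about 45.75°; Euler with 1-minute steps gives about 44.41°, while 0.01-minute steps come within 0.02°.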

Subfields: numerical analysis, computational physics, computational chemistry, bioinformatics, neuroinformatics, psychoinformatics, medical informatics, computational engineering, computational musicology.

Social computing and human-computer interaction

Social computing is an area that is concerned with the intersection of social behavior and computational systems. Human-computer interaction research develops theories, principles, and guidelines for user interface designers.

Software engineering

Software engineering is the study of designing, implementing, and modifying software in order to ensure it is of high quality, affordable, maintainable, and fast to build. It is a systematic approach to software design, involving the application of engineering practices to software. Software engineering deals with the organizing and analyzing of software: it is concerned not only with the creation or manufacture of new software, but also with its internal arrangement and maintenance. Examples include software testing, systems engineering, technical debt, and software development processes.

Discoveries

The philosopher of computing Bill Rapaport noted three Great Insights of Computer Science:[47]

- Gottfried Wilhelm Leibniz's, George Boole's, Alan Turing's, Claude Shannon's, and Samuel Morse's insight: there are only two objects that a computer has to deal with in order to represent "anything".[note 4]

  - All the information about any computable problem can be represented using only 0 and 1 (or any other bistable pair that can flip-flop between two easily distinguishable states, such as "on/off", "magnetized/de-magnetized", "high-voltage/low-voltage", etc.).

- Alan Turing's insight: there are only five actions that a computer has to perform in order to do "anything".

  - Every algorithm can be expressed in a language for a computer consisting of only five basic instructions:[48]

    - move left one location;

    - move right one location;

    - read symbol at current location;

    - print 0 at current location;

    - print 1 at current location.

- Corrado Böhm and Giuseppe Jacopini's insight: there are only three ways of combining these actions (into more complex ones) that are needed in order for a computer to do "anything".[49]

  - Only three rules are needed to combine any set of basic instructions into more complex ones:

    - sequence: first do this, then do that;

    - selection: IF such-and-such is the case, THEN do this, ELSE do that;

    - repetition: WHILE such-and-such is the case, DO this.

  - Note that the three rules of Boehm's and Jacopini's insight can be further simplified with the use of goto (which means it is more elementary than structured programming).
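To make these two insights concrete, the sketch below gives a tape object exactly the five basic instructions, then writes one algorithm (adding 1 to a binary number) using only those instructions combined by sequence and repetition, and a second one using selection. The `Tape` class, the rule that never-written cells read as 0, and the function names are simplifying assumptions; this is a toy, not a full Turing machine with a table of states.

```python
class Tape:
    """A tape of 0/1 cells with a read/write head (cells never written read as 0)."""

    def __init__(self, bits):
        self.cells = dict(enumerate(bits))
        self.head = len(bits) - 1   # start on the rightmost cell

    # The five basic instructions.
    def move_left(self):
        self.head -= 1

    def move_right(self):
        self.head += 1

    def read(self):
        return self.cells.get(self.head, 0)

    def print0(self):
        self.cells[self.head] = 0

    def print1(self):
        self.cells[self.head] = 1

    def contents(self):
        """Helper for showing results; not one of the five instructions."""
        if not self.cells:
            return []
        return [self.cells.get(i, 0) for i in range(min(self.cells), max(self.cells) + 1)]


def increment(tape):
    """Add 1 to the binary number on the tape (head on its last digit)."""
    while tape.read() == 1:   # repetition
        tape.print0()         # sequence: write 0, then move left
        tape.move_left()
    tape.print1()


def flip(tape):
    """Invert the current cell."""
    if tape.read() == 0:      # selection
        tape.print1()
    else:
        tape.print0()


if __name__ == "__main__":
    t = Tape([1, 0, 1, 1])    # 11 in binary
    increment(t)
    print(t.contents())       # [1, 1, 0, 0], i.e. 12
```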

Answering the question

Algorithms are specific sets of instructions or steps for completing a task. For example, suppose a computer scientist wants to sort playing cards. There are many ways to sort them: by suits (diamonds, clubs, hearts, and spades) or by numbers (2, 3, 4, 5, 6, 7, 8, 9, 10, Jack, Queen, King, and Ace). By deciding on a set of steps to sort the cards, the scientist has created an algorithm. The scientist then needs to test whether this algorithm works. Testing also shows how well and how fast the algorithm sorts cards.

A simple but slow algorithm is: look at two cards next to each other and check whether they are in the right order. If they are not, swap them. Then move one place along and do the same with the next two cards, going through the pile again and again until no more swaps are needed. This "bubble sort" method will work, but it will take a very long time for a large pile of cards.

A better algorithm is: find the card with the smallest suit and smallest number (2 of diamonds), and place it at the start. After this, look for the second-smallest card, and so on. This algorithm is a "selection sort". It moves each card into place at most once, so it usually finishes faster than bubble sort, and it does not need much extra space. Both methods still compare many pairs of cards, so neither is fast for very large piles.
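Both card-sorting methods can be written in a few lines. This sketch assumes the suit order from the text (diamonds, clubs, hearts, spades) with Ace high, represents a card as a (number, suit) pair, and counts swaps so the two methods can be compared; the function names are illustrative.

```python
SUITS = ["diamonds", "clubs", "hearts", "spades"]   # suit order from the text
RANKS = ["2", "3", "4", "5", "6", "7", "8", "9", "10", "Jack", "Queen", "King", "Ace"]


def card_key(card):
    """Sort by suit first, then by number; a card is a (number, suit) pair."""
    rank, suit = card
    return (SUITS.index(suit), RANKS.index(rank))


def bubble_sort(cards):
    """Swap neighbouring cards that are out of order until a pass makes no swaps."""
    cards = list(cards)
    swaps = 0
    swapped = True
    while swapped:
        swapped = False
        for i in range(len(cards) - 1):
            if card_key(cards[i]) > card_key(cards[i + 1]):
                cards[i], cards[i + 1] = cards[i + 1], cards[i]
                swaps += 1
                swapped = True
    return cards, swaps


def selection_sort(cards):
    """Repeatedly find the smallest remaining card and move it to the front."""
    cards = list(cards)
    swaps = 0
    for start in range(len(cards)):
        smallest = min(range(start, len(cards)), key=lambda i: card_key(cards[i]))
        if smallest != start:
            cards[start], cards[smallest] = cards[smallest], cards[start]
            swaps += 1
    return cards, swaps


if __name__ == "__main__":
    hand = [("King", "hearts"), ("2", "spades"), ("Ace", "clubs"),
            ("10", "diamonds"), ("2", "diamonds")]
    print(bubble_sort(hand))
    print(selection_sort(hand))
```

For this five-card hand, both methods produce the same order, but bubble sort makes 9 swaps while selection sort makes 3.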

Ada Lovelace wrote what is considered the first computer algorithm in 1843, for a computer that was never finished. Electronic computers were first built around the time of World War II.[50] Computer science separated from the other sciences during the 1960s and 1970s. Now, computer science has its own methods and its own technical terms. It is related to electrical engineering, mathematics, and linguistics.

Computer science looks at the theoretical parts of computers. Computer engineering looks at the physical parts of computers (hardware). Software engineering looks at the use of computer programs and how to make them.

Parts of computer science

Central math

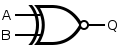

- Boolean algebra (when something can only be true or false)

- Computer numbering formats (how computers count)

- Discrete mathematics (math with numbers a person can count)

- Symbolic logic (clear ways of talking about math)

- Order of operations (which math operations are performed first)
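A few of these ideas in code, as a small sketch: `to_base` (an illustrative name) writes a number in another base by repeated division, which is how binary and hexadecimal forms are worked out; `de_morgan_holds` checks one law of Boolean algebra over all four true/false cases; and the last line shows the order of operations.

```python
from itertools import product


def to_base(n, base):
    """Write a non-negative integer in a base from 2 to 16 by repeated division."""
    digits = "0123456789abcdef"
    if n == 0:
        return "0"
    out = []
    while n > 0:
        n, remainder = divmod(n, base)
        out.append(digits[remainder])   # remainders give the digits, last digit first
    return "".join(reversed(out))


def de_morgan_holds():
    """Check: not (a and b) equals (not a) or (not b) for every pair of values."""
    return all((not (a and b)) == ((not a) or (not b))
               for a, b in product([False, True], repeat=2))


if __name__ == "__main__":
    print(to_base(42, 2), to_base(42, 8), to_base(42, 16))  # 101010 52 2a
    print(de_morgan_holds())                                # True
    print(2 + 3 * 4, (2 + 3) * 4)                           # 14 20
```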

How an ideal computer works

- Algorithmic information theory (how short a computer program can be and still produce a given piece of data)

- Complexity theory (how much time and memory does a computer need to answer a question?)

- Computability theory (can a computer do something?)

- Information theory (math that looks at data and how to process data)

- Theory of computation (how to answer questions on a computer using algorithms)

- Graph theory (math about points joined by lines, used for example to find a way from one point to another)

- Type theory (what kinds of data should computers work with?)

- Denotational semantics (math for computer languages)

- Algorithms (looks at how to answer a question)

- Compilers (turning words into computer programs)

- Lexical analysis (how to turn words into data)

- Microprogramming (how to control the most important part of a computer)

- Operating systems (big computer programs, such as Linux, Microsoft Windows and Mac OS, that control the computer hardware and software)

- Cryptography (hiding data)

- Parallel computing (many instructions are carried out simultaneously)

Computer science at work

- Artificial intelligence (making computers learn and talk, similar to people)

- Computer algebra (using computers for Mathematical problems)

- Computer architecture (building a computer)

- Computer graphics (making pictures with computers)

- Computer networks (joining computers to other computers)

- Computer program (how to tell a computer to do something)

- Computer programming (writing, or making, computer programs)

- Computer security (making computers and their data safe)

- Databases (a way to sort and keep data)

- Data structure (how to build or group data)

- Distributed computing (using more than one computer to solve a difficult problem)

- Information retrieval (getting data back from a computer)

- Programming languages (languages that a programmer uses to make computer programs)

- Program specification (what a program is supposed to do)

- Program verification (making sure a computer program does what it should do, see debugging)

- Robots (using computers to control machines)

- Software engineering (how programmers write programs)

What computer science does

- Benchmark (testing a computer's power or speed)

- Computer vision (how computers can see and understand images)

- Collision detection (how computers help robots move without hitting something)

- Data compression (making data smaller)

- Data structures (how computers group and sort data)

- Data acquisition (putting data into computers)

- Design patterns (answers to common software engineering problems)

- Digital signal processing (cleaning and "looking" at data)

- File formats (how a file is arranged)

- Human-computer interaction (how humans use computers)

- Information security (keeping data safe from other people)

- Internet (a large network that joins almost all computers)

- Web applications (computer programs on the Internet)

- Optimization (making computer programs work better)

- Software metrics (ways to measure computer programs, such as counting lines of code or number of operations)

- VLSI design (designing computer chips that contain a very large number of transistors)

Related pages

References

1. Rana, S. (2021, May 4). Computer number systems: Binary, decimal, octal. eVidyalam. Retrieved January 12, 2022.

2. "Charles Babbage Institute: Who Was Charles Babbage?". cbi.umn.edu. Retrieved 28 December 2016.

3. "Ada Lovelace | Babbage Engine | Computer History Museum". www.computerhistory.org. Retrieved 28 December 2016.

4. "Wilhelm Schickard – Ein Computerpionier" (PDF) (in German).

5. Keates, Fiona (25 June 2012). "A Brief History of Computing". The Repository. The Royal Society.

6. "Science Museum, Babbage's Analytical Engine, 1834–1871 (Trial model)". Retrieved 2020-05-11.

7. Anthony Hyman (1982). Charles Babbage, pioneer of the computer.

8. "A Selection and Adaptation From Ada's Notes found in Ada, The Enchantress of Numbers" by Betty Alexandra Toole, Ed.D. Strawberry Press, Mill Valley, CA. Archived from the original on February 10, 2006. Retrieved 4 May 2006.

9. "The John Gabriel Byrne Computer Science Collection" (PDF). Archived from the original on April 16, 2019. Retrieved August 8, 2019.

10. "In this sense Aiken needed IBM, whose technology included the use of punched cards, the accumulation of numerical data, and the transfer of numerical data from one register to another". Bernard Cohen (2000), p. 44.

11. Brian Randell (1975), p. 187.

12. The Association for Computing Machinery (ACM) was founded in 1947.

13. "IBM Archives: 1945". Ibm.com. Retrieved 2019-03-19.

14. "IBM100 – The Origins of Computer Science". Ibm.com. 1995-09-15. Retrieved 2019-03-19.

15. Denning, Peter J. (2000). "Computer Science: The Discipline" (PDF). Encyclopedia of Computer Science. Archived from the original (PDF) on May 25, 2006.

16. "Some EDSAC statistics". University of Cambridge. Retrieved 19 November 2011.

17. "Computer science pioneer Samuel D. Conte dies at 85". Purdue Computer Science. July 1, 2002. Retrieved December 12, 2014.

18. Tedre, Matti (2014). The Science of Computing: Shaping a Discipline. Taylor and Francis / CRC Press.

19. Louis Fine (1959). "The Role of the University in Computers, Data Processing, and Related Fields". Communications of the ACM. 2 (9): 7–14. doi:10.1145/368424.368427. S2CID 6740821.

20. "Stanford University Oral History". Stanford University. Retrieved May 30, 2013.

21. Donald Knuth (1972). "George Forsythe and the Development of Computer Science". Communications of the ACM. Archived October 20, 2013, at the Wayback Machine.

22. Matti Tedre (2006). "The Development of Computer Science: A Sociocultural Perspective" (PDF). p. 260. Retrieved December 12, 2014.

23. Peter Naur (1966). "The science of datalogy". Communications of the ACM. 9 (7): 485. doi:10.1145/365719.366510. S2CID 47558402.

24. Weiss, E.A.; Corley, Henry P.T. "Letters to the editor". Communications of the ACM. 1 (4): 6. doi:10.1145/368796.368802. S2CID 5379449.

25. Communications of the ACM. 2 (1): 4.

26. IEEE Computer. 28 (12): 136.

27. Mounier-Kuhn, P. (2010). L'Informatique en France, de la seconde guerre mondiale au Plan Calcul. L'émergence d'une science. Paris: PUPS. Chapters 3 and 4.

28. Groth, Dennis P. (February 2010). "Why an Informatics Degree?". Communications of the ACM. Cacm.acm.org.

29. Tedre, M. (2011). "Computing as a Science: A Survey of Competing Viewpoints". Minds and Machines. 21 (3): 361–387. doi:10.1007/s11023-011-9240-4. S2CID 14263916.

30. Parnas, D.L. (1998). "Software engineering programmes are not computer science programmes". Annals of Software Engineering. 6: 19–37. doi:10.1023/A:1018949113292. S2CID 35786237. p. 19: "Rather than treat software engineering as a subfield of computer science, I treat it as an element of the set, Civil Engineering, Mechanical Engineering, Chemical Engineering, Electrical Engineering, […]"

31. Wegner, P. (October 13–15, 1976). "Research paradigms in computer science". Proceedings of the 2nd International Conference on Software Engineering. San Francisco, California, United States: IEEE Computer Society Press, Los Alamitos, CA.

32. Denning, P.J.; Comer, D.E.; Gries, D.; Mulder, M.C.; Tucker, A.; Turner, A.J.; Young, P.R. (January 1989). "Computing as a discipline". Communications of the ACM. 32: 9–23. doi:10.1145/63238.63239. S2CID 723103.

33. Eden, A.H. (2007). "Three Paradigms of Computer Science" (PDF). Minds and Machines. 17 (2): 135–167. CiteSeerX 10.1.1.304.7763. doi:10.1007/s11023-007-9060-8. S2CID 3023076. Archived from the original (PDF) on February 15, 2016.

34. Turner, Raymond; Angius, Nicola (2019). "The Philosophy of Computer Science". In Zalta, Edward N. (ed.). The Stanford Encyclopedia of Philosophy.

35. "Computer Science as a Profession". Computing Sciences Accreditation Board. May 28, 1997. Archived from the original on June 17, 2008. Retrieved 23 May 2010.

36. Committee on the Fundamentals of Computer Science: Challenges and Opportunities, National Research Council (2004). Computer Science: Reflections on the Field, Reflections from the Field. National Academies Press. ISBN 978-0-309-09301-9.

37. "CSAB Leading Computer Education". CSAB. August 3, 2011. Retrieved 19 November 2011.

38. Clay Mathematics Institute, P = NP. Archived October 14, 2013, at the Wayback Machine.

39. Collins, Graham P. (October 14, 2002). "Claude E. Shannon: Founder of Information Theory". Scientific American. Retrieved December 12, 2014.

40. Van-Nam Huynh; Vladik Kreinovich; Songsak Sriboonchitta (2012). Uncertainty Analysis in Econometrics with Applications. Springer Science & Business Media. p. 63. ISBN 978-3-642-35443-4.

41. Phillip A. Laplante (2010). Encyclopedia of Software Engineering Three-Volume Set (Print). CRC Press. p. 309. ISBN 978-1-351-24926-3.

42. Thisted, Ronald A. (April 7, 1997). "Computer Architecture" (PDF). The University of Chicago.

43. Jiacun Wang (2017). Real-Time Embedded Systems. Wiley. p. 12. ISBN 978-1-119-42070-5.

44. Gordana Dodig-Crnkovic; Raffaela Giovagnoli (2013). Computing Nature: Turing Centenary Perspective. Springer Science & Business Media. p. 247. ISBN 978-3-642-37225-4.

45. Simon Elias Bibri (2018). Smart Sustainable Cities of the Future: The Untapped Potential of Big Data Analytics and Context-Aware Computing for Advancing Sustainability. Springer. p. 74. ISBN 978-3-319-73981-6.

46. Muhammad H. Rashid (2016). SPICE for Power Electronics and Electric Power. CRC Press. p. 6. ISBN 978-1-4398-6047-2.

47. Rapaport, William J. (20 September 2013). "What Is Computation?". State University of New York at Buffalo.

48. B. Jack Copeland (2012). Alan Turing's Electronic Brain: The Struggle to Build the ACE, the World's Fastest Computer. OUP Oxford. p. 107. ISBN 978-0-19-960915-4.

49. Charles W. Herbert (2010). An Introduction to Programming Using Alice 2.2. Cengage Learning. p. 122. ISBN 0-538-47866-7.

50. "A Brief History of Computer Science | World Science Festival". World Science Festival. Retrieved 2018-03-20.
